WASHINGTON — By now we've all heard that the Pfizer COVID-19 vaccine has an efficacy rate of 95%, Moderna 94%, and Johnson & Johnson about 66%. What you may not have known is the technical term for that rate: "relative risk reduction."

Some people online are suggesting we should instead look at a different measure of efficacy, called "absolute risk reduction."

Some posts point to this peer-reviewed paper, arguing that if you look at absolute risk reduction instead of relative risk reduction, the risk of getting or dying from COVID-19 may drop by only a very small percentage if you get the vaccine.

So what does this other metric tell us?

What is absolute risk reduction, and does it show the drop in risk to be so much smaller than relative risk reduction does?

We asked two experts in math and health: Dr. Stephen Kissler, who has a Ph.D. in applied mathematics and is a postdoctoral research fellow at the Harvard T.H. Chan School of Public Health, and Dr. Ali Mokdad, who has a Ph.D. in quantitative epidemiology and is a professor of global health at the Institute for Health Metrics and Evaluation at the University of Washington.

In the context of COVID, Kissler explains, absolute risk reduction measures how much your risk drops when you get a vaccine.

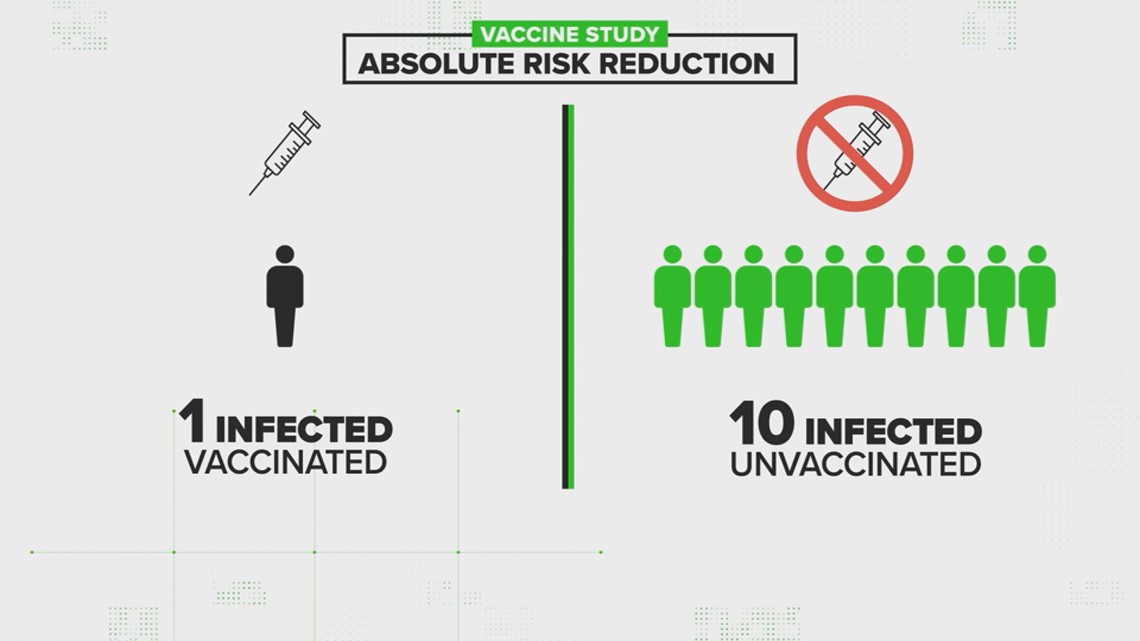

For example, let's say there's a vaccine study where 100 people get the shot and 100 people get a placebo. In the vaccine group, just one person gets sick, and in the placebo group, 10 people get sick.

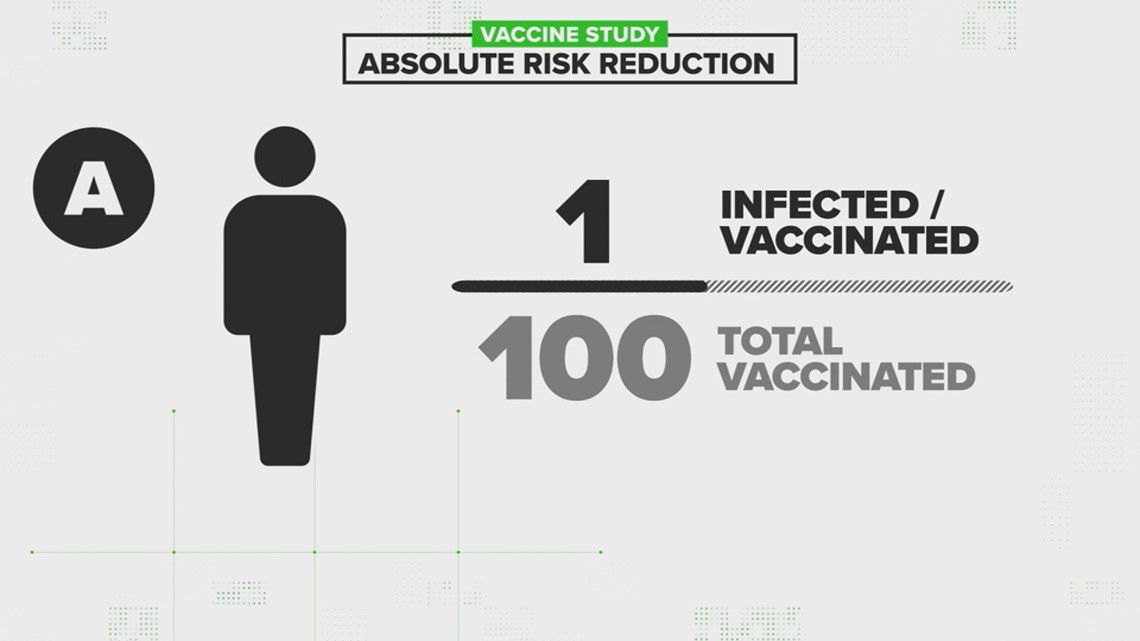

Let’s break it down for Person A, who got the vaccine, and Person B, who did not.

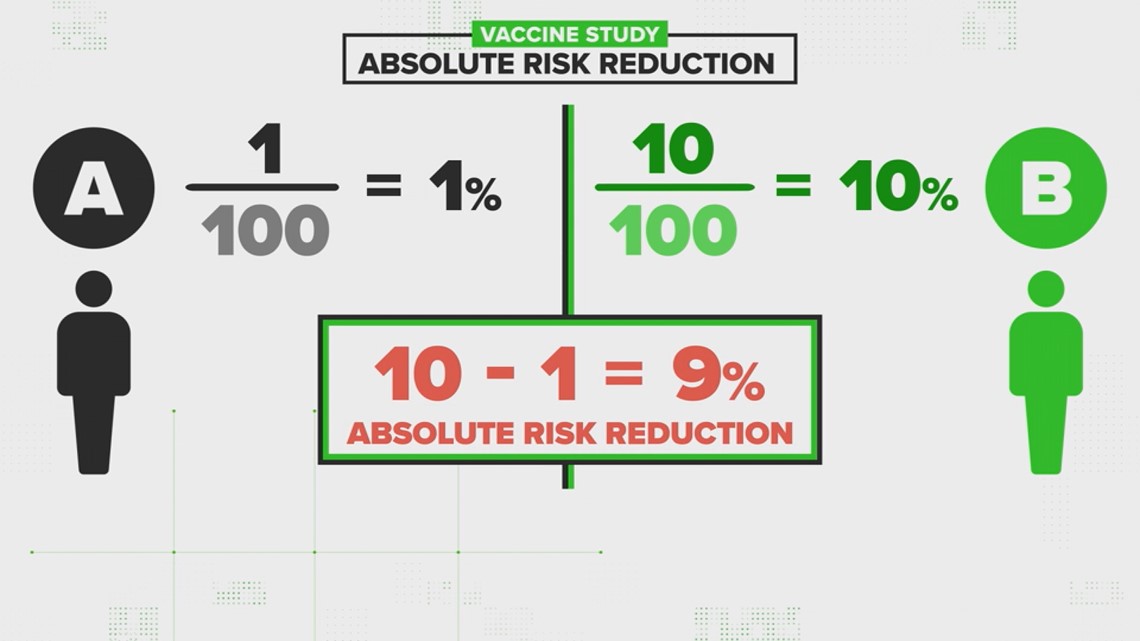

For Person A, the absolute risk is 1 out of 100, because just one person got sick in that whole group.

For Person B, that absolute risk is 10 out of 100, because 10 people got sick.

If you compare those two numbers, 1% and 10%, that's a difference of nine percentage points. That's what's called the absolute risk reduction.
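For readers who want to check the arithmetic, here is a minimal Python sketch of that calculation. It uses only the made-up study numbers from this example, not real trial data, and the function and variable names are ours, chosen for illustration.

```python
from fractions import Fraction


def absolute_risk(cases: int, group_size: int) -> Fraction:
    """Share of a group that got sick, kept as an exact fraction."""
    return Fraction(cases, group_size)


# Hypothetical study from the example: 100 people in each group.
vaccinated_risk = absolute_risk(1, 100)   # Person A's group: 1 of 100 got sick
placebo_risk = absolute_risk(10, 100)     # Person B's group: 10 of 100 got sick

# Absolute risk reduction is the gap between the two absolute risks.
absolute_risk_reduction = placebo_risk - vaccinated_risk

print(f"Vaccinated group risk: {float(vaccinated_risk):.0%}")
print(f"Placebo group risk: {float(placebo_risk):.0%}")
print(f"Absolute risk reduction: {float(absolute_risk_reduction) * 100:.0f} percentage points")
```

Because this number is a simple subtraction, it depends heavily on how many people in the placebo group got sick during the study.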

Now let's talk about relative risk.

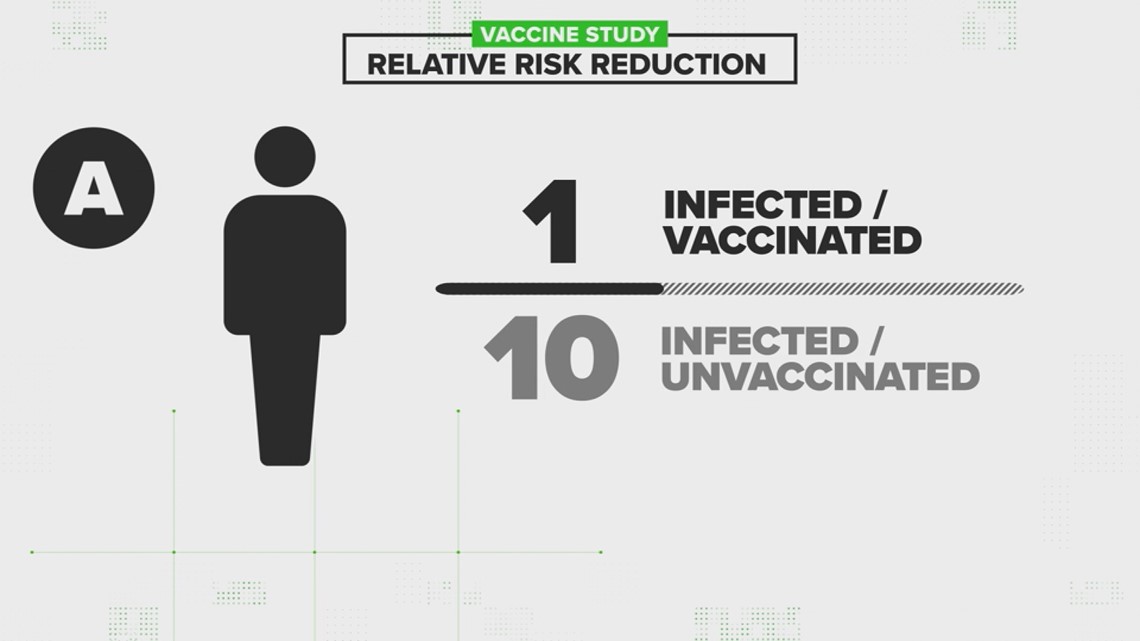

"Relative risk is asking 'what are my chances of getting infected relative to a vaccinated person?'" Kissler says.

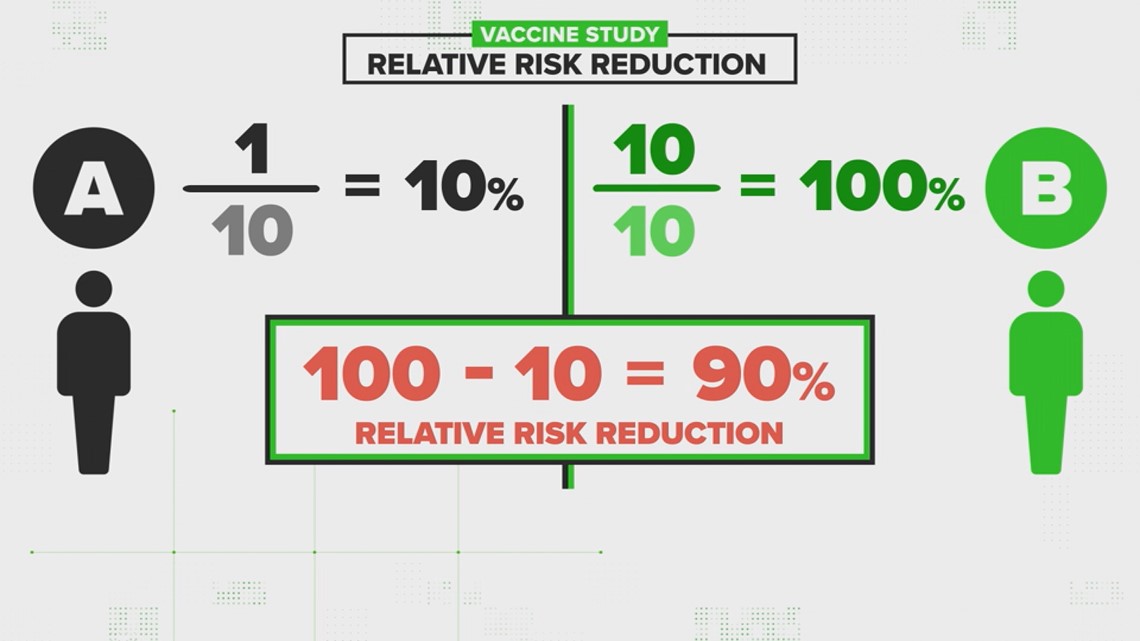

For this we compare our vaccinated person, Person A, with our non-vaccinated person, Person B.

For Person A, the relative risk is 1 out of 10, because just one vaccinated person got sick compared to the 10 unvaccinated people who got sick.

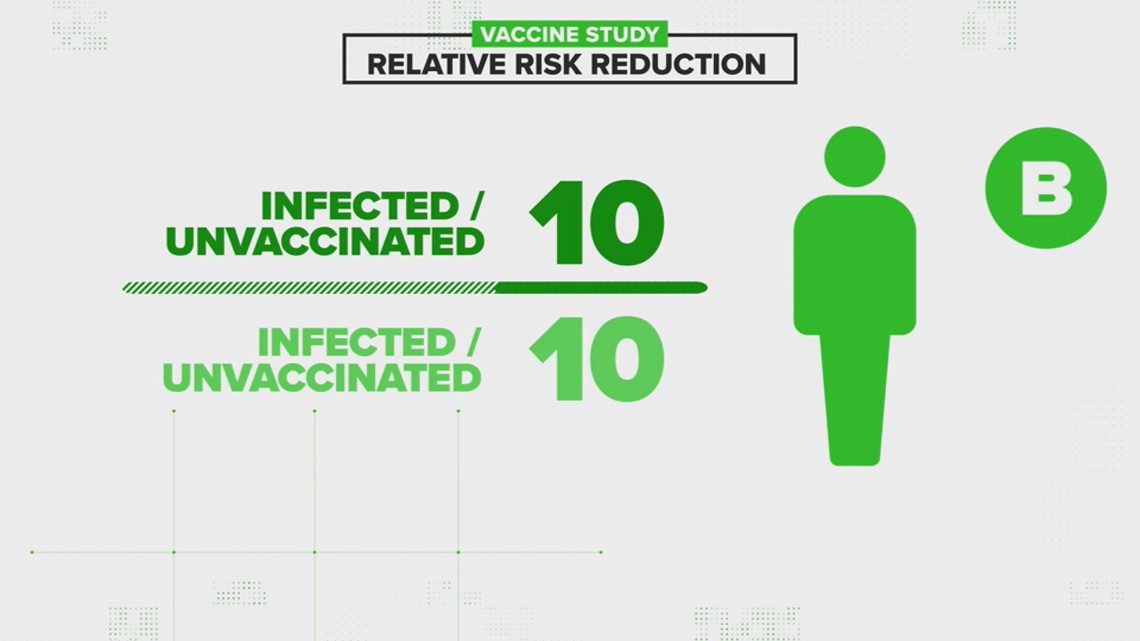

For Person B, the relative risk is 10 out of 10.

"For unvaccinated people, it's 10/10 (10 infections in the unvaccinated group vs 10 infections in the (same) unvaccinated group)," Kissler explained via email. "Because of this, the relative risk in the 'reference' group will always be 100%!"

If you compare those two numbers, 10% and 100%, that's a 90% difference. That's the relative risk reduction.
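The same made-up study can be run through a short Python sketch to show where the 90% comes from. Again, these are the illustrative numbers from the example, not real trial data, and the variable names are our own.

```python
from fractions import Fraction

# Hypothetical study from the example: 100 people in each group.
vaccinated_risk = Fraction(1, 100)   # 1 of 100 vaccinated people got sick
placebo_risk = Fraction(10, 100)     # 10 of 100 unvaccinated people got sick

# Relative risk divides each group's risk by the reference (placebo) risk,
# so the reference group always comes out at 100%.
relative_risk_vaccinated = vaccinated_risk / placebo_risk   # 1 out of 10
relative_risk_placebo = placebo_risk / placebo_risk         # 10 out of 10

# Relative risk reduction is the gap between those two relative risks.
relative_risk_reduction = relative_risk_placebo - relative_risk_vaccinated

print(f"Relative risk, vaccinated: {float(relative_risk_vaccinated):.0%}")
print(f"Relative risk, unvaccinated: {float(relative_risk_placebo):.0%}")
print(f"Relative risk reduction: {float(relative_risk_reduction):.0%}")
```

Dividing by the placebo group's risk is what makes this figure a ratio rather than a subtraction of raw risks.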

"That's a lot higher, right...and that was the issue that the paper brought up, was that the relative risk calculation in this case is a lot higher," Kissler said. "And so what's correct? Should we be quoting 90%? Or should we be quoting 9%? Because those give you a very different intuitive feel for the difference in risk that you get for being vaccinated. Well, I think both are useful.”

Dr. Mokdad says it's not at all unreasonable that the public is hearing about the relative risk reduction rather than the absolute one. The decision is about conveying a public health message rather than throwing numbers and percentages at the public. He says the goal of public health messaging is for information to connect with people and help them understand complex topics.

"If I come to you and explain to you the absolute difference in lung cancer if you smoke, [it] doesn't connect. But, if I come and tell you, 'if you smoke, that it's nine times more likely to get lung cancer,' then that's a public health message," Mokdad explains. "That's what we want is we want to connect with the public, and we scientists have to speak a language that connects to the public. If I can't explain it to somebody who is not an epidemiologist or a scientist, then I failed miserably."

Our Verify researchers spoke with the author behind the scientific paper going around. He believes that the public has a right to know both numbers.